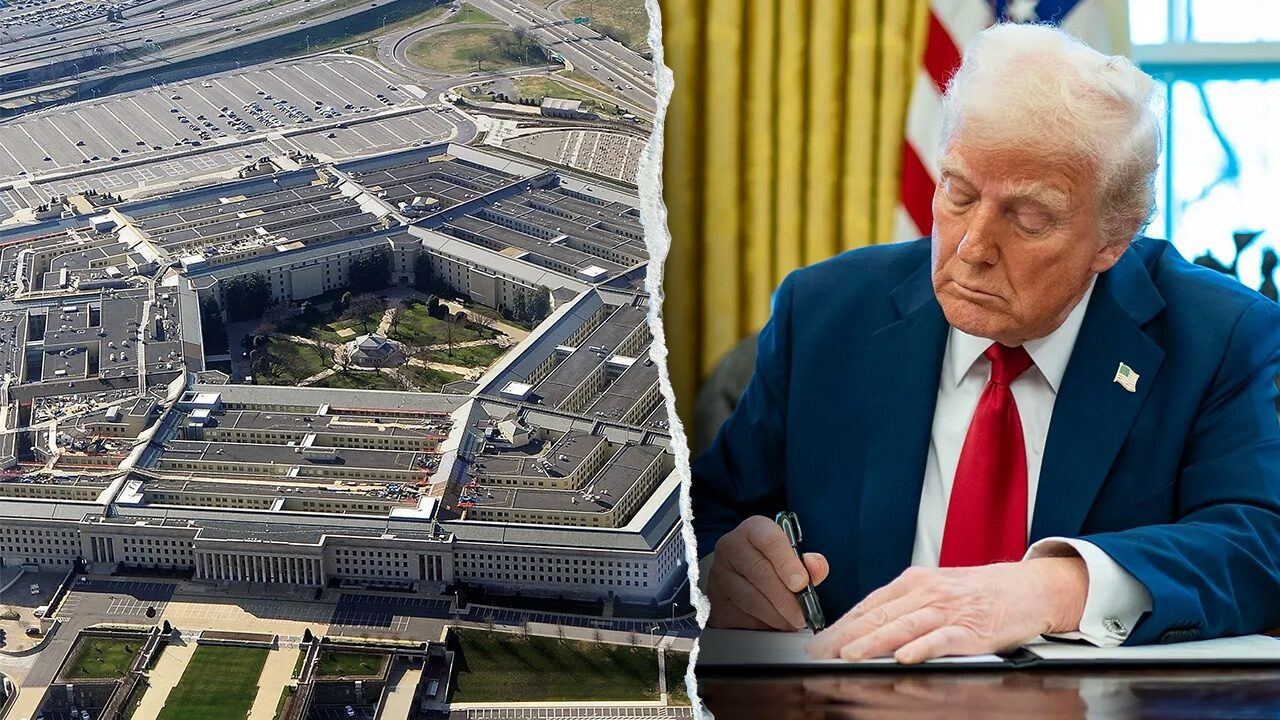

Pentagon Utilizes Anthropic AI for Iran Strikes Despite Trump’s Federal Ban

The Pentagon’s use of Anthropic’s AI in operations against Iran continued despite President Donald Trump’s executive order barring the software from federal operations.

Recent reports indicate that the United States Central Command (CENTCOM) used Anthropic’s Claude AI system in support of air strikes in Iran. The deployment came shortly after Trump explicitly directed federal agencies to cut all ties with the company.

According to The Wall Street Journal, defense officials used the AI to analyze intelligence data and identify specific targets. The software also ran complex battle simulations, giving commanders a digital preview of each mission before forces were deployed on the ground or in the air.

CENTCOM officials declined to comment when asked about the specific digital tools used in the current engagement with Iran. Still, the timing highlights a significant gap between the administration’s policy and the tactical reality on the front lines.

The controversy stems from a recent directive in which Trump ordered federal agencies to “immediately cease” all work with Anthropic. Trump has labeled the company a national security threat and instructed the Pentagon to treat it as a “supply chain risk,” a designation that starts a six-month timeline for removing the software from all government servers.

Despite the ongoing political tensions, Anthropic’s Claude AI has an established track record with the U.S. intelligence community.

The model was cleared for classified environments some time ago through partnerships with Palantir and Amazon Web Services. It also played a low-profile but essential role in the January operation in Caracas that led to the capture of Nicolás Maduro.

Anthropic is one of a select group of AI labs, alongside OpenAI and Google, that hold substantial Pentagon contracts valued at approximately $200 million. Losing that status would hurt the company both financially and reputationally.

In response to the abrupt ban, Anthropic issued a statement calling the “supply chain risk” designation an “unprecedented action” normally reserved for the nation’s actual adversaries. The company said it intends to challenge the order in court, pointing to its work on classified networks since mid-2024 and its commitment to continuing its military support roles.